AI, ML, DL, and NLP: An Overview

Today, artificial intelligence (AI), machine learning (ML), deep learning (DL), and natural language processing (NLP) have all become part of the fabric of enterprise IT.

However, solution providers and end users often use these terms interchangeably. Although the concepts overlap significantly, there are also important distinctions between these key technologies.

Here’s a quick overview of the definition and scope of each of these terms.

Artificial Intelligence (AI)

The term AI has been around since the 1950s and broadly refers to the simulation of human intelligence by machines. It encompasses several areas beyond computer science including psychology, philosophy, linguistics and others.

AI can be classified into four types, from simplest to most advanced, as reactive machines, limited memory, theory of mind and self-awareness.

Reactive machines: Purely reactive machines are trained to perform a basic set of tasks based on certain inputs. This AI cannot function beyond a specific context and is not capable of learning or evolving over time. Examples: IBM’s Deep Blue chess AI, and Google’s AlphaGo AI.

Limited memory systems: As the nomenclature suggests, these AI systems have limited memory to store and analyze data. This memory is what enables “learning” and gives them the capability to improve over time. In practical terms, these are the most advanced AI systems we currently have. Examples: self-driving vehicles, virtual voice assistants, chatbots.

Theory of mind: At this level, we are into theoretical concepts that have not yet been achieved. With the ability to understand human thoughts and emotions, these advanced AI systems would be able to facilitate more complex two-way interactions with users.

Self-awareness: Self-aware AI with human-level desires, emotions and consciousness is the aspirational end state for AI and, as yet, is pure science fiction.

Another broad approach distinguishes AI systems by the breadth of their capabilities: narrow or weak AI, specialized intelligence trained to perform specific tasks, sometimes better than humans; artificial general intelligence (AGI) or strong AI, a theoretical system that could be applied to any task or problem; and artificial superintelligence (ASI), AI that comprehensively surpasses human cognition.

The concept of AI is continuously evolving based on the emergence of technologies that enable the most accurate simulation of human intelligence. Some of those technologies include ML, DL, and artificial neural networks (ANN) or simply neural networks (NN).

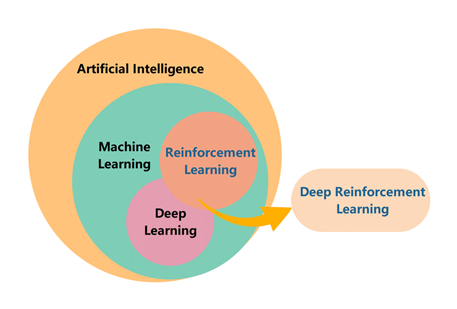

ML, DL, RL, and DRL

Here’s the TL;DR before we get into each of these concepts in a bit more detail: If AI’s objective is to endow machines with human intelligence, ML refers to methods for implementing AI by using algorithms for data-driven learning and decision-making. DL is a technology for realizing ML and expanding the scope of AI. Reinforcement Learning (RL), or evaluation learning, is an ML technique. And deep reinforcement learning (DRL) combines DL and RL to realize optimization objectives and advance toward general AI.

SOURCE: Researchgate

Machine Learning (ML)

ML is a subset of AI that uses algorithms, including neural networks, to give machines the ability to learn from data and experience and to act without being explicitly programmed for each task.

ML algorithms can be broadly classified into three categories.

Supervised learning algorithms use a labelled input dataset with known responses to develop a regression or classification model that can then be applied to new data to generate predictions or draw conclusions. The limitation of this approach is that it depends on labelled data and does not generalize well to data that falls outside the context it was trained on.
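
As a minimal sketch of the supervised approach, the snippet below fits a straight line to a handful of labelled examples using ordinary least squares and then predicts a value for an unseen input. The dataset (hours studied and exam scores) and the function names `fit_line` and `predict` are hypothetical, chosen only for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply the fitted line to a new, unlabelled input."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical labelled data: inputs (hours studied) with known responses (scores).
hours = [1.0, 2.0, 3.0, 4.0, 5.0]
scores = [52.0, 54.0, 56.0, 58.0, 60.0]

model = fit_line(hours, scores)
print(predict(model, 6.0))  # 62.0
```

The known responses are what make this supervised: the model is only as good as the labelled examples it was fitted on.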

Unsupervised learning algorithms are given “unknown” data that has not yet been categorized or labelled. In this case, the ML system itself learns to group and process the unlabelled data based on its inherent structure. There is also an intermediate approach between supervised and unsupervised learning, called semi-supervised learning, where the system is trained on a small amount of labelled data together with a larger amount of unlabelled data to determine correlations between data points.
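
To illustrate learning from structure alone, here is a small sketch of k-means clustering on one-dimensional data. No labels are supplied; the algorithm only sees the values. The data points and the starting centroids (the minimum and maximum value) are assumptions made for this example.

```python
def kmeans_1d(values, centroids, iterations=10):
    """Lloyd's algorithm on 1-D data, starting from the given centroids."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        # Assignment step: each value joins the cluster of its nearest centroid.
        for value in values:
            nearest = min(range(len(centroids)),
                          key=lambda i: abs(value - centroids[i]))
            clusters[nearest].append(value)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters


# Hypothetical unlabelled measurements that happen to form two groups.
data = [1.0, 1.2, 0.8, 8.0, 8.2, 7.8]
centroids, clusters = kmeans_1d(data, centroids=[min(data), max(data)])
print(centroids)  # two centroids, close to 1.0 and 8.0
print(clusters)
```

The two groups emerge from the distances between points, not from any category names provided in advance.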

Reinforcement learning (RL) is an ML paradigm where algorithms learn through ongoing interactions between an AI system and its environment. Algorithms receive numerical scores as rewards for generating decisions and outcomes so that positive interactions and behaviours are reinforced over time.
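
The reward loop can be sketched with one of the simplest RL settings, a multi-armed bandit with an epsilon-greedy strategy. The agent repeatedly picks an action, receives a numerical reward, and updates its running estimate of how good that action is. The reward values, the exploration rate `epsilon`, and the random seed are all assumptions for illustration, and rewards are kept deterministic to keep the sketch short.

```python
import random


def run_bandit(rewards, steps=1000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent interacting with a fixed-reward environment."""
    rng = random.Random(seed)
    estimates = [0.0] * len(rewards)  # running average reward per action
    counts = [0] * len(rewards)       # how often each action was taken
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random action.
            action = rng.randrange(len(rewards))
        else:
            # Exploit: take the action with the best estimate so far.
            action = max(range(len(rewards)), key=lambda a: estimates[a])
        reward = rewards[action]  # feedback from the environment
        counts[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


# Hypothetical environment: three actions with different payoffs.
estimates, counts = run_bandit([0.2, 0.5, 1.0])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print(best_action, estimates, counts)
```

Actions that pay off are chosen more often over time, which is the "reinforcement" in the name. Full RL problems add states and delayed rewards on top of this loop.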

Deep Learning (DL)

DL is a subset of ML in which models built on deep (multi-layer) neural networks detect patterns in data, including unlabelled and unstructured data, with minimal human involvement. DL is loosely inspired by the human brain: the idea is to use neural networks to teach models to perceive, classify, and analyze information and to keep learning from these interactions.
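
To make the idea of stacked layers concrete, the sketch below runs a forward pass through a tiny two-layer network that computes XOR, a function a single linear layer cannot represent. Note the assumption: the weights here are hand-picked for illustration, whereas a real DL model would learn them from data through training.

```python
def relu(x):
    """Rectified linear unit: passes positives through, zeroes out negatives."""
    return max(0.0, x)


def identity(x):
    return x


def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(weights . inputs + bias) per unit."""
    return [activation(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def xor_network(a, b):
    # Hidden layer with two units (hand-picked weights, not learned).
    hidden = dense([a, b], [[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0], relu)
    # Output layer combines the hidden features into a single value.
    output = dense(hidden, [[1.0, -2.0]], [0.0], identity)
    return output[0]


for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(a, b, xor_network(a, b))
```

Each layer transforms the output of the previous one, and deeper networks simply stack more of these transformations.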

DL techniques can be classified into three major categories: deep networks for supervised or discriminative learning, deep networks for unsupervised or generative learning, and deep networks for hybrid learning, which integrate supervised and unsupervised models along with other related techniques.

Deep Reinforcement Learning (DRL) combines RL with DL techniques to solve challenging sequential decision-making problems. Because DL can learn different levels of abstraction from data, DRL is capable of addressing more complicated tasks than RL alone.

Natural Language Processing (NLP)

What is natural language processing?

NLP is the branch of AI that deals with the training of machines to understand, process, and generate language. By enabling machines to process human languages, NLP helps streamline information exchange between human beings and machines and opens up new avenues by which AI algorithms can receive data. NLP functionality is derived from cross-disciplinary theories from linguistics, AI and computer science.

There are two main types of NLP algorithms, rules-based and ML-based. Rules-based systems use carefully designed linguistic rules whereas ML-based systems use statistical methods. NLP also consists of two core subsets, natural language understanding (NLU) and natural language generation (NLG).
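
The difference between the two types can be sketched with a toy sentiment task. The rules-based function relies on a hand-written word list, while the ML-based one is a small Naive Bayes classifier that estimates word statistics from labelled examples. The word lists, training sentences, and function names are all hypothetical.

```python
import math
from collections import Counter

# Rules-based: a hand-designed lexicon (hypothetical word lists).
POSITIVE = {"good", "great", "excellent"}
NEGATIVE = {"bad", "poor", "terrible"}


def rule_based_sentiment(text):
    words = text.lower().split()
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return "positive" if score > 0 else "negative" if score < 0 else "neutral"


# ML-based: Naive Bayes with add-one smoothing, learned from examples.
def train_naive_bayes(examples):
    word_counts, label_counts, vocab = {}, Counter(), set()
    for text, label in examples:
        label_counts[label] += 1
        counter = word_counts.setdefault(label, Counter())
        for word in text.lower().split():
            counter[word] += 1
            vocab.add(word)
    return word_counts, label_counts, vocab


def classify(model, text):
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())

    def log_prob(label):
        counts = word_counts[label]
        denom = sum(counts.values()) + len(vocab)
        score = math.log(label_counts[label] / total)
        for word in text.lower().split():
            score += math.log((counts[word] + 1) / denom)
        return score

    return max(label_counts, key=log_prob)


training = [
    ("the results were good", "positive"),
    ("a great and useful tool", "positive"),
    ("the results were bad", "negative"),
    ("a poor and confusing tool", "negative"),
]
model = train_naive_bayes(training)

print(rule_based_sentiment("useful results"))  # neutral: "useful" is not in the lexicon
print(classify(model, "useful results"))       # positive: learned from the examples
```

The rules-based version is predictable but only knows what its author wrote down. The statistical version picks up "useful" from the training data, at the cost of depending on that data.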

NLU enables computers to comprehend the meaning and intent of human language, which is what allows them to respond to humans in their own languages. NLG is the use of AI programming to turn data into language, for example by mining large quantities of numerical data, identifying patterns and sharing that information as written or spoken narratives that are easier for humans to understand.
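
As a very simplified sketch of the NLG idea, the function below turns a series of numbers into a one-sentence narrative using a template. Production NLG systems are far more sophisticated; the metric name, the values, and the function `describe_trend` are hypothetical.

```python
def describe_trend(metric, values):
    """Summarize the overall change in a numeric series as a sentence."""
    first, last = values[0], values[-1]
    change = (last - first) / first * 100  # percentage change, first to last
    if change > 0:
        direction = "rose"
    elif change < 0:
        direction = "fell"
    else:
        return f"{metric} was unchanged at {first}."
    return f"{metric} {direction} {abs(change):.1f}% from {first} to {last}."


print(describe_trend("Weekly sign-ups", [200, 230, 250]))
# Weekly sign-ups rose 25.0% from 200 to 250.
```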

So there we have it, a quick rundown of some of the key technological acronyms that dominate the conversation today. You can also learn more about how AI/ML technologies are ushering in a new era of Intelligent Bioinformatics and autonomous drug discovery and the importance and challenges of NLP in biomedical research.

